Textual exercise

Overview

Conducting a text exercise consists of 3 steps:

Instructor prepares exercise: Instructor creates and configures the text exercise in Artemis.

Student solves exercise: Student works on the exercise and submits the solution.

Tutor assesses submissions: Tutor reviews the submitted exercises and creates results for the students.

Setup

The following sections describe the supported features and the process of creating a new text exercise.

Open

.

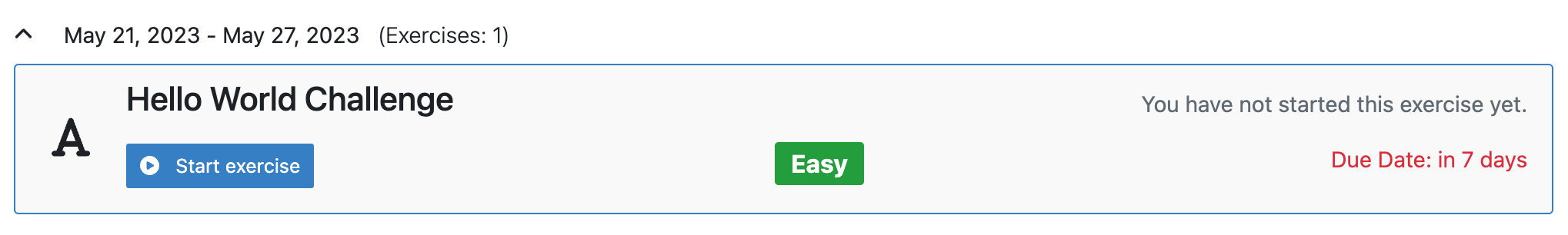

.Navigate into Exercises of your preferred course.

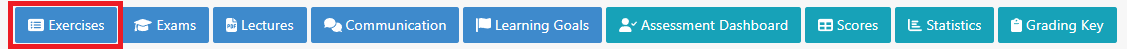

Create Text Exercise

Click on Create new text exercise.

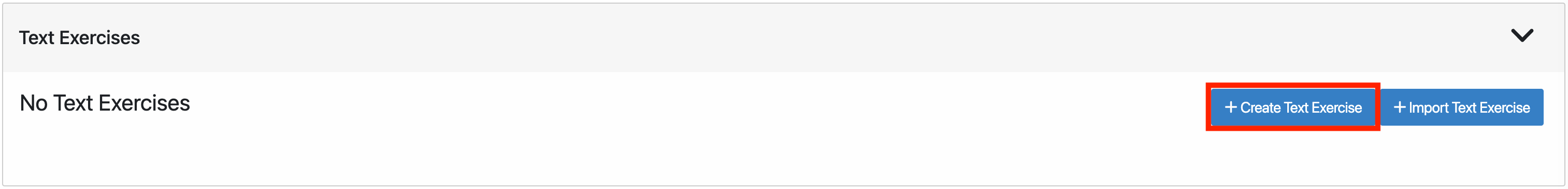

The following screenshot illustrates the first section of the form. It consists of:

Title: Title of an exercise.

Categories: Category of an exercise.

Difficulty: Difficulty of an exercise (No Level, Easy, Medium, or Hard).

Mode: Solving mode of an exercise (Individual or Team). This cannot be changed afterward.

Release Date: Date after which the exercise is released to the students.

Start Date: Date after which students can start the exercise.

Due Date: Date until which students can work on the exercise.

Assessment Due Date: Date after which students can view the feedback of the assessments from the instructors.

Inclusion in course score calculation: Option that determines whether or not to include exercise in course score calculation.

Points: Total points of an exercise.

Bonus Points: Bonus points for an exercise.

Automatic assessment suggestions enabled: When enabled, Artemis tries to automatically suggest assessments for text blocks based on previously graded submissions for this exercise using the Athena service.

Note

Fields marked in red are mandatory.

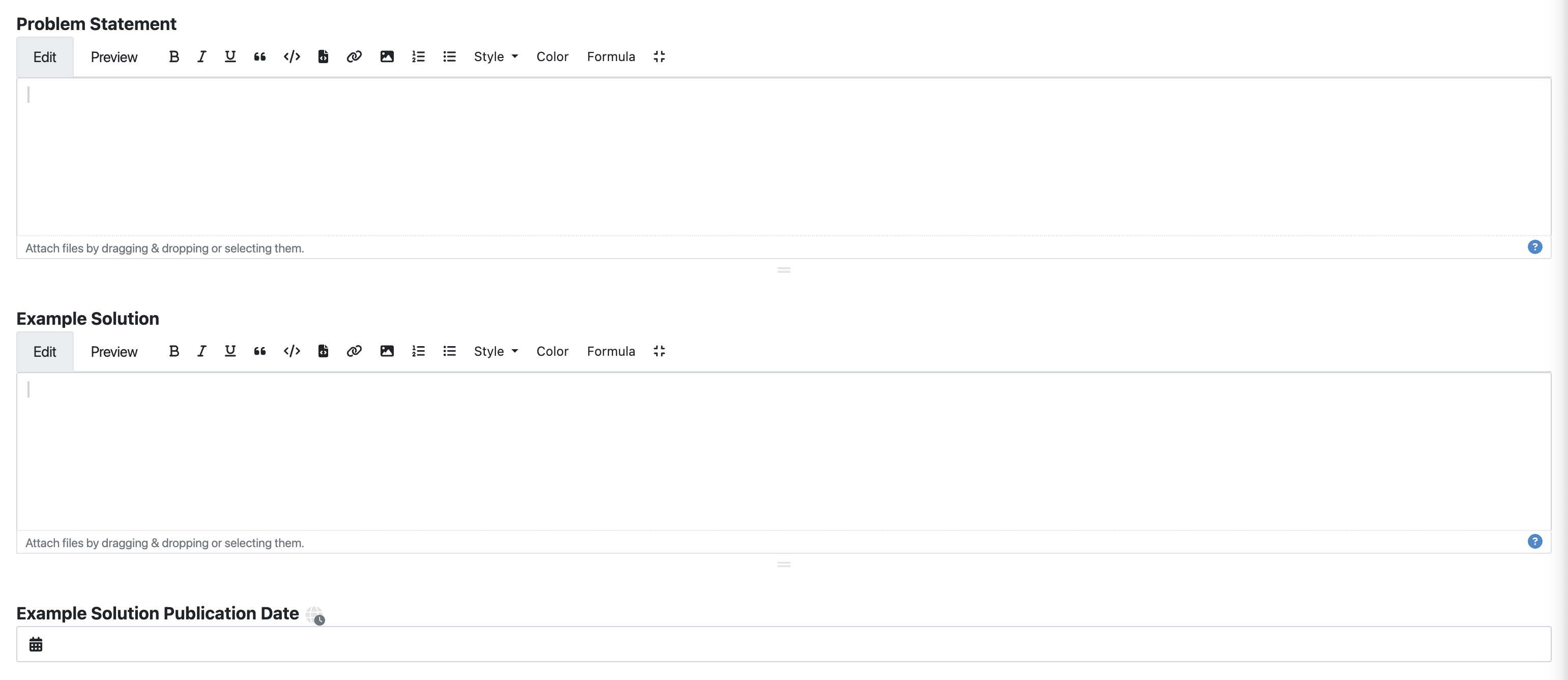
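The four date fields above define a timeline for the exercise. The following is a minimal sketch of that timeline, not Artemis code: the class `ExerciseDates`, its method names, the phase labels, and the exact boundary handling (whether a timestamp equal to a date counts as before or after it) are assumptions made for illustration. The chronological-order check is likewise an assumed sanity rule, not a documented validation.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class ExerciseDates:
    """Hypothetical container for the four date fields of the form."""
    release: datetime         # exercise is released to students after this
    start: datetime           # students can start working after this
    due: datetime             # students can work until this
    assessment_due: datetime  # students can view feedback after this

    def is_chronological(self) -> bool:
        # Assumed sanity rule: the dates must not go backwards.
        return self.release <= self.start <= self.due <= self.assessment_due

    def phase(self, now: datetime) -> str:
        # Boundary handling (< versus <=) is an assumption of this sketch.
        if now < self.release:
            return "not released"
        if now < self.start:
            return "released"
        if now <= self.due:
            return "open"
        if now <= self.assessment_due:
            return "in assessment"
        return "feedback visible"


dates = ExerciseDates(
    release=datetime(2024, 1, 1),
    start=datetime(2024, 1, 2),
    due=datetime(2024, 1, 9),
    assessment_due=datetime(2024, 1, 16),
)
print(dates.phase(datetime(2024, 1, 5)))  # prints "open"
```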

The following screenshot illustrates the second section of the form. It consists of:

Problem Statement: The task description of the exercise as seen by students.

Example Solution: Example solution of an exercise.

Example Solution Publication Date: Date after which the example solution is accessible for students. If you leave this field empty, the solution will only be published to tutors.

Note

If you are unsure about any of the fields, many of them offer additional hints when you hover over their icon.

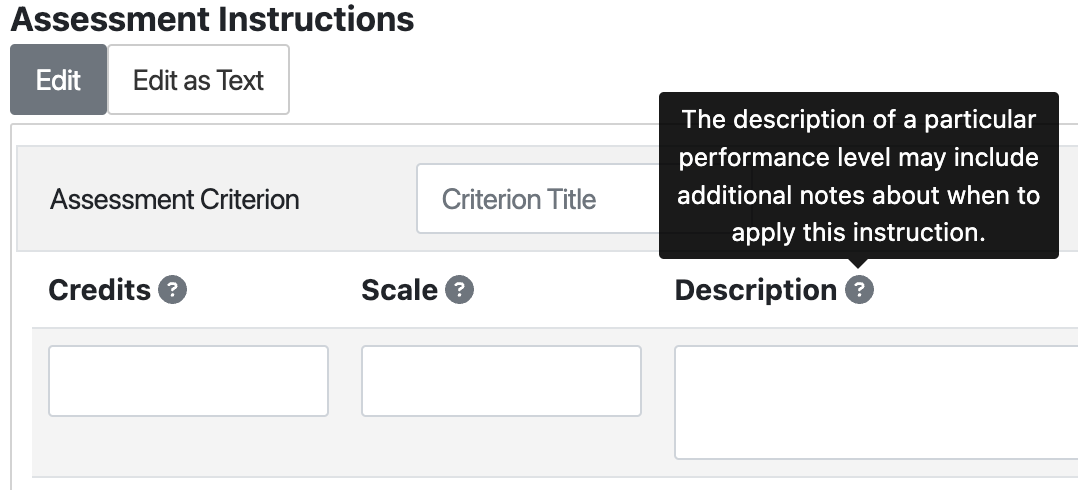

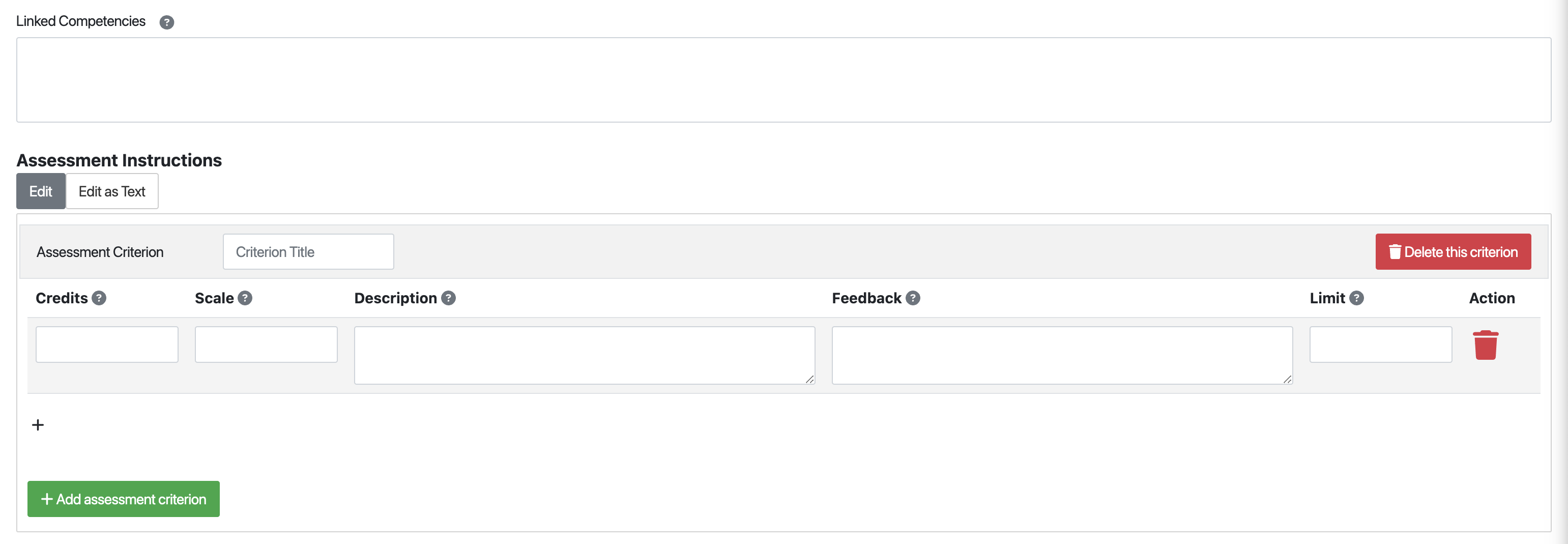
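The rule for the Example Solution Publication Date can be read as a small visibility check. The sketch below is illustrative only: the function name, the role strings `"tutor"` and `"student"`, and the choice to treat the publication instant itself as already published are assumptions, not part of Artemis.

```python
from datetime import datetime
from typing import Optional


def can_view_example_solution(
    role: str, publication_date: Optional[datetime], now: datetime
) -> bool:
    """Hypothetical check mirroring the description of the form field."""
    if role == "tutor":
        # With an empty date the solution is published to tutors only;
        # this sketch assumes tutors can also see it once a date is set.
        return True
    # Students see the solution only once a publication date has passed.
    return publication_date is not None and now >= publication_date


now = datetime(2024, 2, 1)
print(can_view_example_solution("student", None, now))  # prints False
print(can_view_example_solution("tutor", None, now))    # prints True
```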

The following screenshot illustrates the last section of the form:

Linked Competencies: In case instructors created competencies, they can link them to the exercise here. See Adaptive Learning for more information.

Assessment Instructions: Assessment instructions (comparable to grading rubrics) simplify the grading process. They include predefined feedback and points. Reviewers can drag and drop a suitable instruction to the text element to apply it during the assessment. The Credits specify the score of the instruction. The Scale describes the performance level of the instruction (e.g., excellent, good, average, poor). The Description may include additional notes about when to apply this instruction. Feedback is an explanatory text for the students to understand their performance level better. The Limit specifies how many times the score of this instruction may be included in the final score.

Once you are done defining the schema of the exercise, you can create it by clicking on the button.

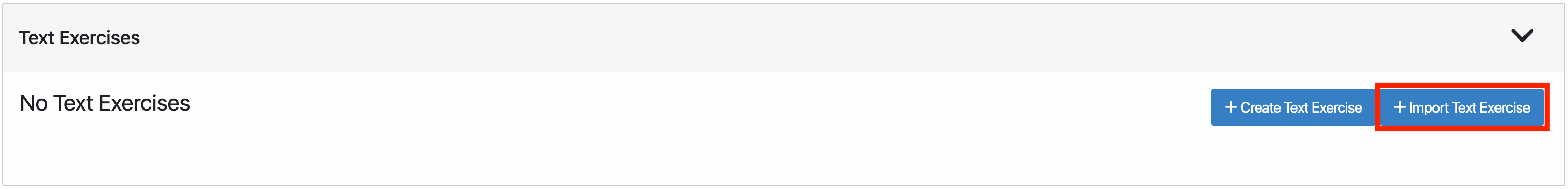

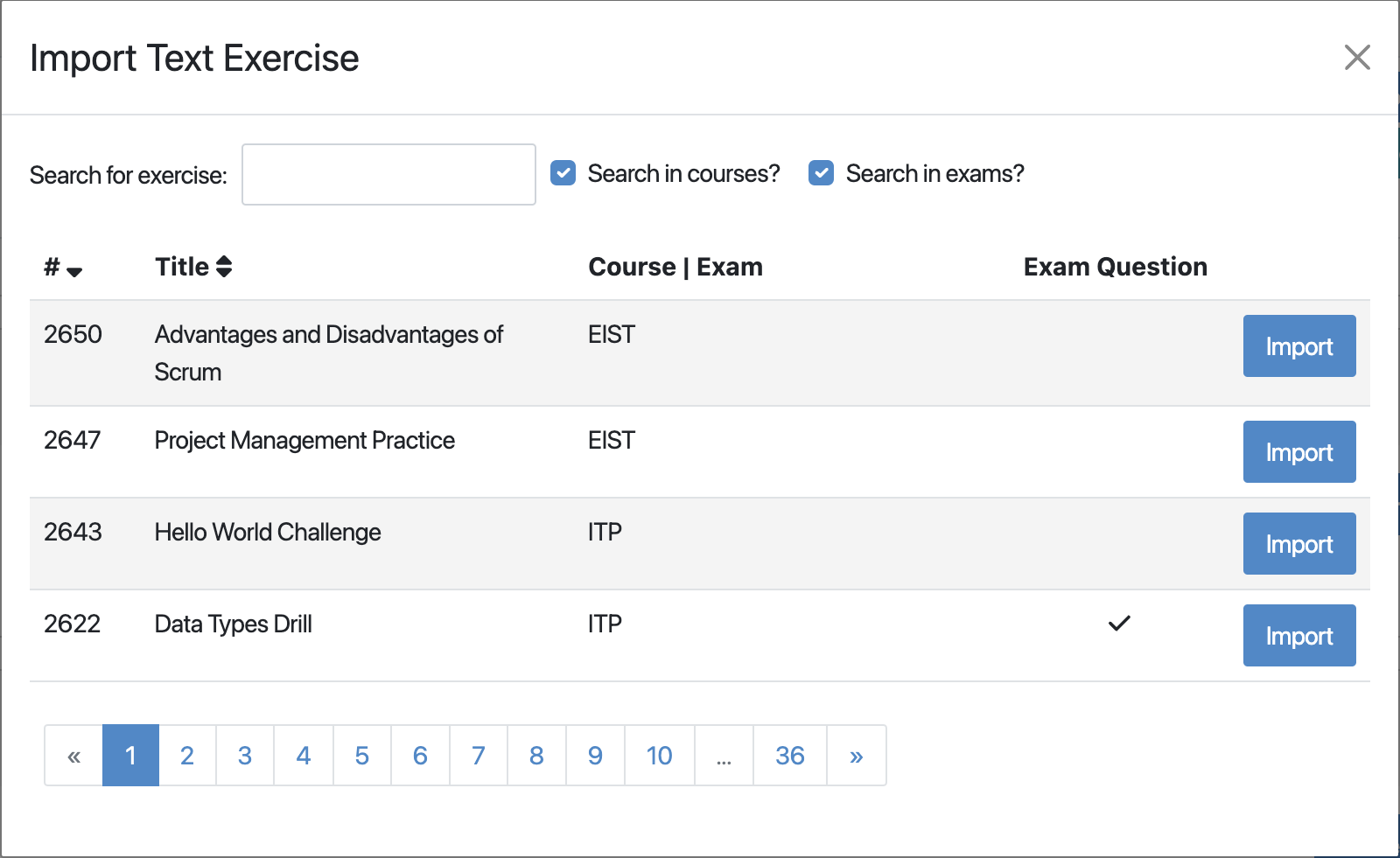
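To make the interplay of Credits and Limit in the Assessment Instructions concrete, here is a minimal sketch. It assumes that each application of an instruction contributes its Credits and that applications beyond the Limit contribute nothing; the names `Instruction` and `instruction_score`, the `name` key, and the convention that `None` means "no limit" are hypothetical and not taken from Artemis.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Iterable, Mapping, Optional


@dataclass(frozen=True)
class Instruction:
    name: str                    # hypothetical identifier for lookup
    credits: float               # score of the instruction
    scale: str                   # performance level, e.g. "good"
    description: str             # notes on when to apply the instruction
    feedback: str                # explanatory text shown to the student
    limit: Optional[int] = None  # max. counted applications; None = no limit (assumption)


def instruction_score(
    instructions: Mapping[str, Instruction], applied: Iterable[str]
) -> float:
    """Sum the credits of applied instructions, honoring each limit."""
    total = 0.0
    for name, times in Counter(applied).items():
        instruction = instructions[name]
        counted = times if instruction.limit is None else min(times, instruction.limit)
        total += instruction.credits * counted
    return total


catalog = {
    "good": Instruction("good", 2.0, "good", "Clear argument", "Well argued.", limit=2),
    "poor": Instruction("poor", -1.0, "poor", "Missing reasoning", "Explain why."),
}
# "good" is applied three times but counted twice: 2 * 2.0 + 1 * (-1.0)
print(instruction_score(catalog, ["good", "good", "good", "poor"]))  # prints 3.0
```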

Import Text Exercise

Alternatively, you can import a text exercise from an existing one by clicking on Import Text Exercise.

An import modal will pop up, where you can select a previous text exercise from the list and import it by clicking on the Import button.

Once you import one of the exercises, you will be redirected to a form similar to the Create text exercise form, with all fields filled in from the imported exercise. You can now modify the fields as necessary to create a text exercise.

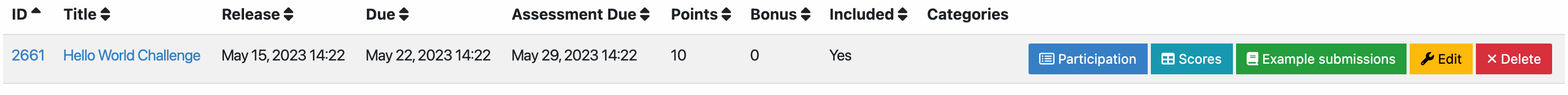

Result

The buttons of the text exercise let you do the following:

Adapt the interactive problem statement. There you can also set release and due dates.

See the scores achieved by the students.

See the list of students who participated in the exercise.

Modify or add an example submission of the exercise.

You can also get an overview of the exercise by clicking on the title.

Student Submission

When the exercise is released, students can work on the exercise.

Once they start the exercise, they will now have the option to work on it in an online text editor by clicking on the

button.

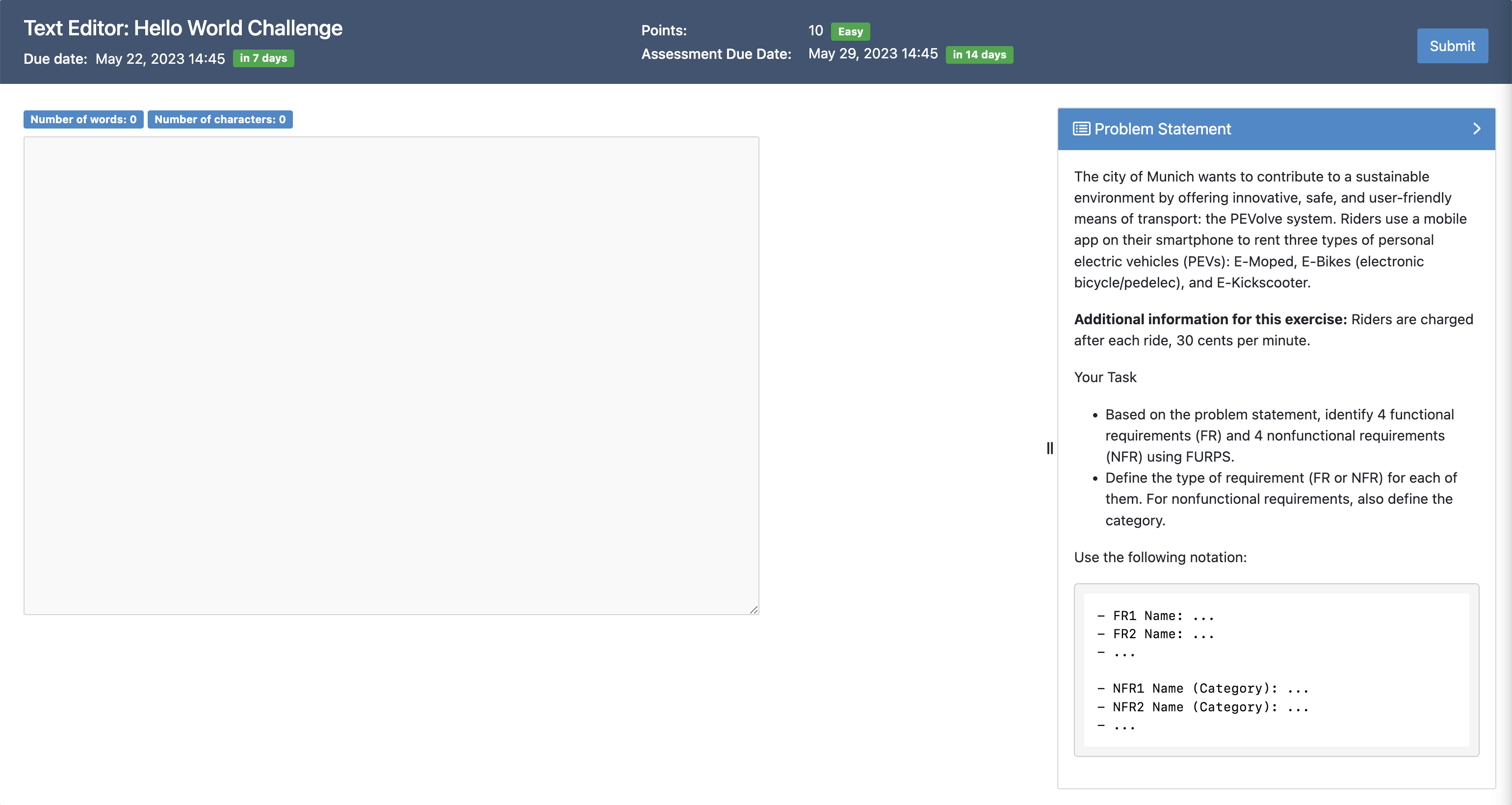

button.The screenshot below depicts the exercise interface for students. They can read the problem statement on the right and fill in their solution in the textbox on the left. To submit, you need to click on the

button on the top right.

button on the top right.

Assessment

When the due date is over, you can assess the submissions.

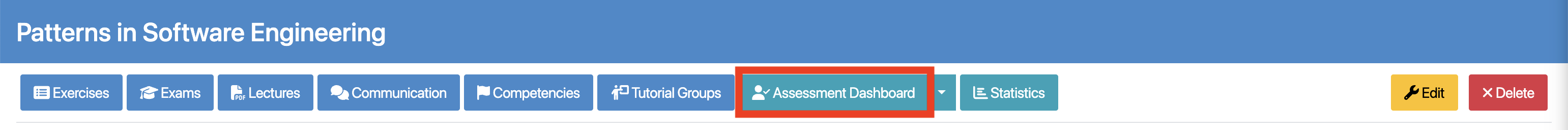

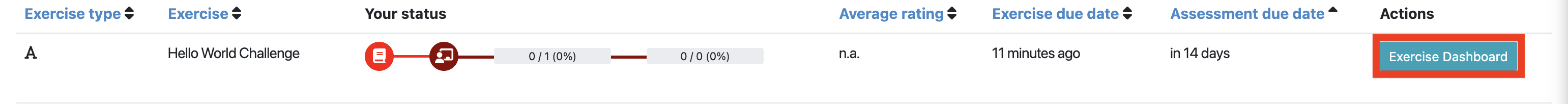

To assess the submissions, first click on Assessment Dashboard.

Then click on Exercise Dashboard of the text exercise.

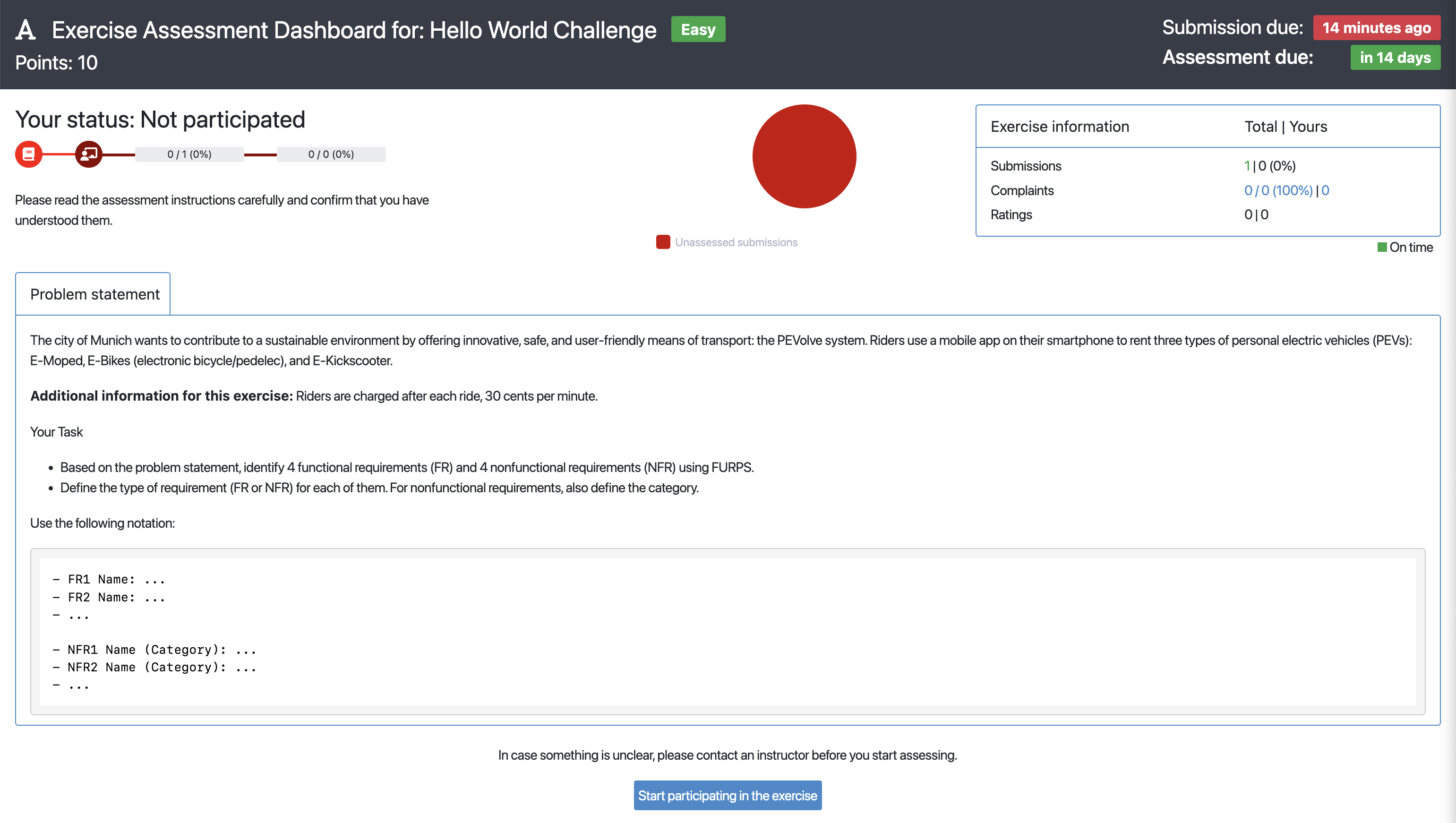

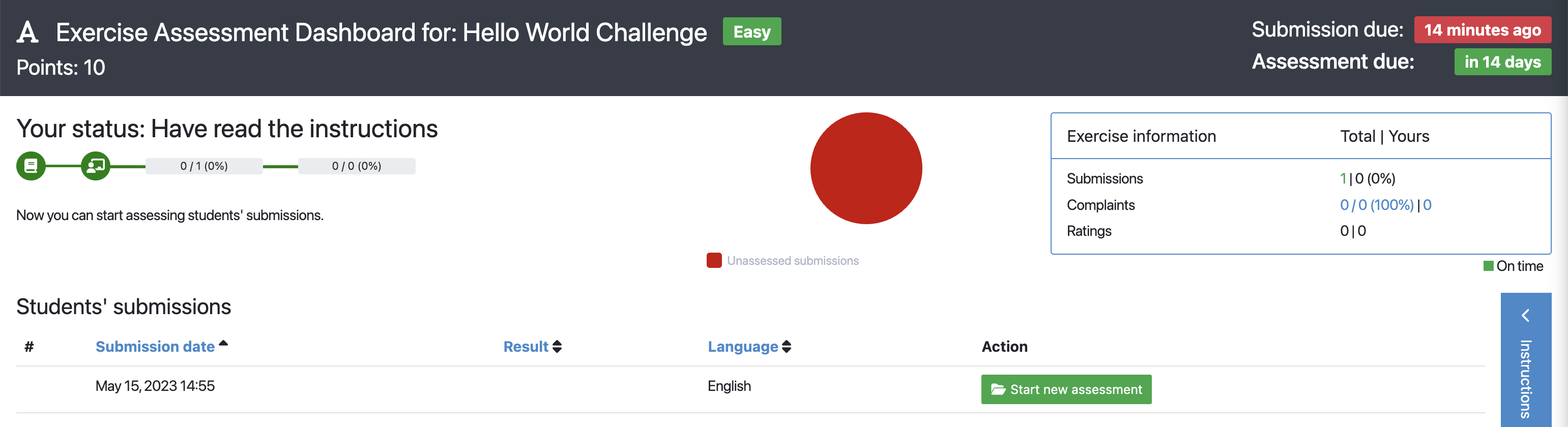

You will then be redirected to Exercise Assessment Dashboard.

In case you have not assessed a submission of this exercise before, you will be shown the problem statement and a summary of the assessment instructions. To learn more about this feature, take a look at Artemis’ Integrated Training Process. Once you know what the exercise is about, you can click on the button.

In case unassessed submissions are available, you can click on the button. You will then be redirected to the assessment page, where you can assess the submission of a random student.

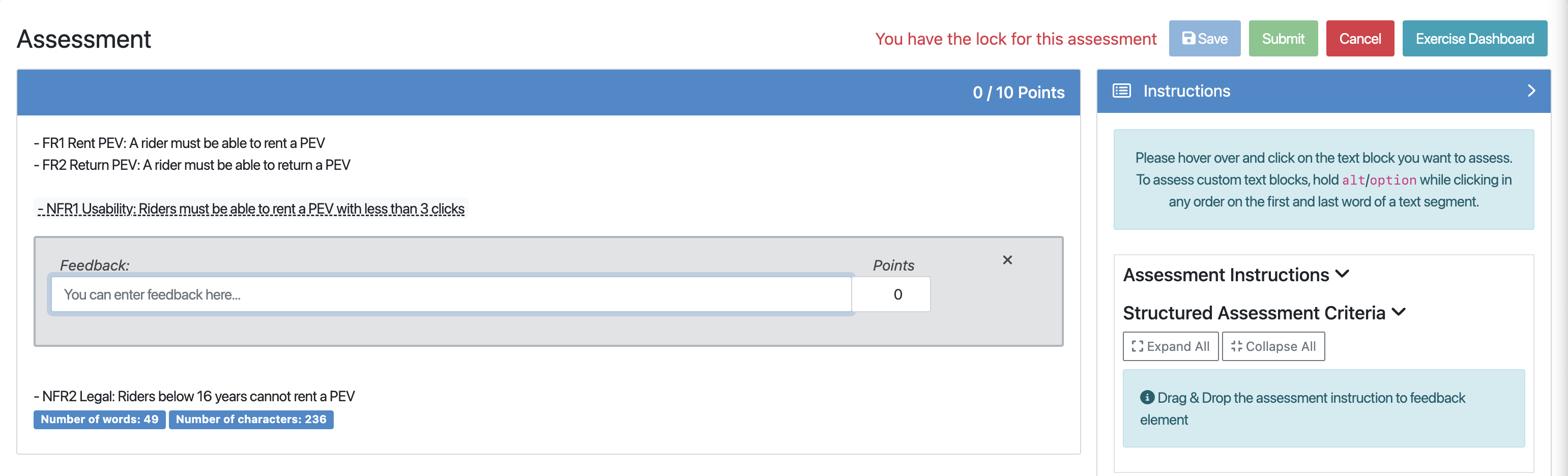

You can now start assessing text blocks by clicking on them. This opens an assessment dialog where you can assign points and provide feedback. To assess custom text blocks, hold Alt/Option while clicking, in any order, on the first and last word of a text segment.

Alternatively, you can also assess the text blocks by dragging and dropping assessment instructions from the Assessment Instructions section.

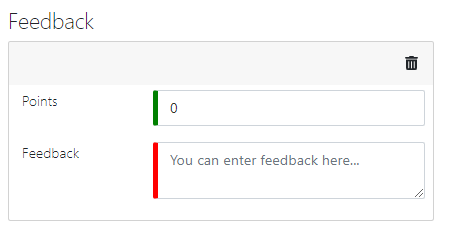
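The custom text block selection described above works regardless of which end of the segment is clicked first. A minimal sketch of that idea follows; it is not the Artemis implementation. The function names, the use of word indices to represent clicks, and the whitespace-based word splitting are assumptions made for illustration.

```python
import re
from typing import List, Tuple


def word_spans(text: str) -> List[Tuple[int, int]]:
    """Return (start, end) character offsets of each whitespace-separated word."""
    return [match.span() for match in re.finditer(r"\S+", text)]


def select_block(text: str, first_click: int, second_click: int) -> str:
    """Return the segment between two clicked words, given in any order.

    The arguments are zero-based word indices (a simplification of real clicks).
    """
    spans = word_spans(text)
    low, high = sorted((first_click, second_click))
    start = spans[low][0]  # first character of the earlier word
    end = spans[high][1]   # one past the last character of the later word
    return text[start:end]


answer = "The observer pattern decouples subjects from observers."
print(select_block(answer, 3, 1))  # prints "observer pattern decouples"
print(select_block(answer, 1, 3))  # prints "observer pattern decouples"
```

Sorting the two indices first is what makes the click order irrelevant.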

Feedback on the entire submission can also be added by clicking on the button. A form will open, allowing you to input your feedback.

If automatic assessment suggestions are enabled for the exercise, you will receive the available suggestions from the Athena service. More details about this service can be found in the following research papers:

Note

Jan Philip Bernius, Stephan Krusche, and Bernd Bruegge. Machine learning based feedback on textual student answers in large courses. Computers and Education: Artificial Intelligence, June 2022. doi:10.1016/j.caeai.2022.100081.

Jan Philip Bernius, Stephan Krusche, and Bernd Bruegge. A machine learning approach for suggesting feedback in textual exercises in large courses. In 8th ACM Conference on Learning @ Scale, L@S '21, 173–182. Association for Computing Machinery (ACM), June 2021. doi:10.1145/3430895.3460135.

Jan Philip Bernius. Toward computer-aided assessment of textual exercises in very large courses. In 52nd ACM Technical Symposium on Computer Science Education, SIGCSE '21, 1386. Association for Computing Machinery (ACM), March 2021. doi:10.1145/3408877.3439703.

Jan Philip Bernius, Anna Kovaleva, Stephan Krusche, and Bernd Bruegge. Towards the automation of grading textual student submissions to open-ended questions. In 4th European Conference of Software Engineering Education, ECSEE '20, 61–70. Association for Computing Machinery (ACM), June 2020. doi:10.1145/3396802.3396805.

Jan Philip Bernius, Anna Kovaleva, and Bernd Bruegge. Segmenting student answers to textual exercises based on topic modeling. In 17th Workshop on Software Engineering im Unterricht der Hochschulen, SEUH '20, 72–73. CEUR-WS.org, February 2020. URL: https://ceur-ws.org/Vol-2531/poster03.pdf.

Jan Philip Bernius and Bernd Bruegge. Toward the automatic assessment of text exercises. In 2nd Workshop on Innovative Software Engineering Education, ISEE '19, 19–22. CEUR-WS.org, February 2019. URL: https://ceur-ws.org/Vol-2308/isee2019paper04.pdf.

Once you’re done assessing the solution, you can either:

Click the button to save the incomplete assessment so that you can continue it afterward.