Programming Exercise

Overview

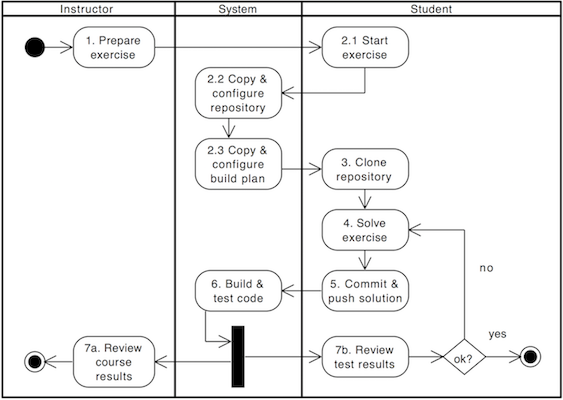

Conducting a programming exercise consists of 8 steps (one of them optional) distributed among instructor, Artemis and students:

1. Instructor prepares exercise: Set up a repository containing the exercise code and test cases, set up build instructions on the CI server, and configure the exercise in Artemis.

2. Student starts exercise: Click on start exercise in Artemis, which automatically generates a copy of the repository with the exercise code and configures a build plan accordingly.

3. Optional: Student clones repository: Clone the personalized repository from the remote VCS to the local machine.

4. Student solves exercise: Solve the exercise with an IDE of choice on the local computer or in the online editor.

5. Student uploads solution: Upload changes of the source code to the VCS by committing and pushing them to the remote server (or by clicking submit in the online editor).

6. CI server verifies solution: Verify the student's submission by executing the test cases (see step 1) and provide feedback on which parts are correct or wrong.

7. Student reviews personal result: Review the build result and feedback using Artemis. In case of a failed build, reattempt to solve the exercise (step 4).

8. Instructor reviews course results: Review overall results of all students, and react to common errors and problems.

The following activity diagram shows this exercise workflow.

Exercise Workflow

Setup

The following sections describe the supported features and the process of creating a new programming exercise.

Features

Artemis and its version control and continuous integration infrastructure are independent of the programming language and thus support teaching and learning with any programming language that can be compiled and tested on the command line.

Instructors have a lot of freedom in defining the environment (e.g. using build agents and Docker images) in which student code is executed and tested.

To simplify the setup of programming exercises, Artemis supports several templates that show how the setup works.

Instructors can use these templates to generate programming exercises and then adapt and customize the settings in the repositories and build plans.

The support for a specific programming language template depends on the continuous integration system in use. The table below gives an overview:

| Programming Language | Bamboo | Jenkins |
|---|---|---|
| Java | yes | yes |
| Python | yes | yes |
| C | yes | yes |
| Haskell | yes | yes |
| Kotlin | yes | no |
| VHDL | yes | no |
| Assembler | yes | no |
| Swift | yes | no |

Not all templates support the same feature set. Depending on the feature set, some options might not be available during the creation of the programming exercise. The table below provides an overview of the supported features:

| Programming Language | Sequential Test Runs | Static Code Analysis | Plagiarism Check | Package Name | Project Type | Solution Repository Checkout |
|---|---|---|---|---|---|---|
| Java | yes | yes | yes | yes | yes | no |
| Python | yes | no | yes | no | no | no |
| C | no | no | yes | no | no | no |
| Haskell | yes | no | no | no | no | yes |
| Kotlin | yes | no | no | yes | no | no |
| VHDL | no | no | no | no | no | no |
| Assembler | no | no | no | no | no | no |
| Swift | no | no | no | no | no | no |

Sequential Test Runs: Artemis can generate a build plan which first executes structural and then behavioral tests. This feature can help students to better concentrate on the immediate challenge at hand.

Static Code Analysis: Artemis can generate a build plan which additionally executes static code analysis tools. Artemis categorizes the found issues and provides them as feedback for the students. This feature makes students aware of code quality issues in their submissions.

Plagiarism Checks: Artemis is able to automatically calculate the similarity between student submissions. A side-by-side view of similar submissions is available to confirm the plagiarism suspicion.

Package Name: A package name has to be provided.

Solution Repository Checkout: Instructors are able to compare a student submission against a sample solution in the solution repository.

Note

Only some templates for Bamboo support Sequential Test Runs at the moment.

Note

Instructors are still able to extend the generated programming exercises with additional features that are not available in one specific template.

We encourage instructors to contribute improvements to the existing templates or to provide new templates. Please contact Stephan Krusche and/or create pull requests in the GitHub repository.

Exercise Creation

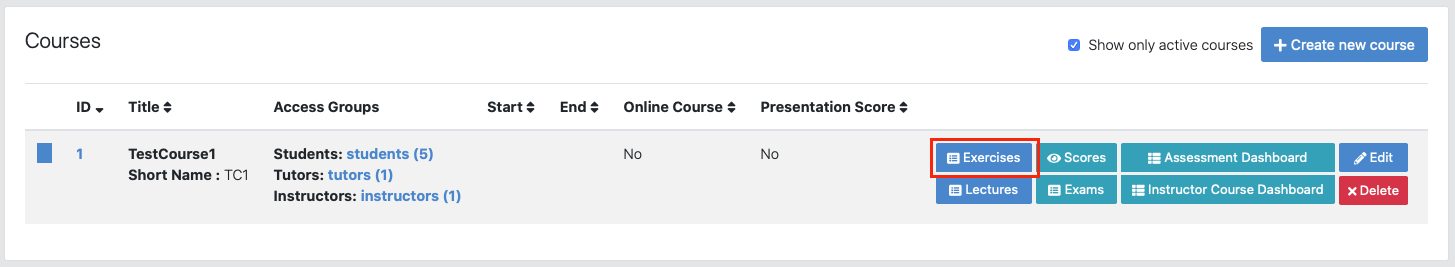

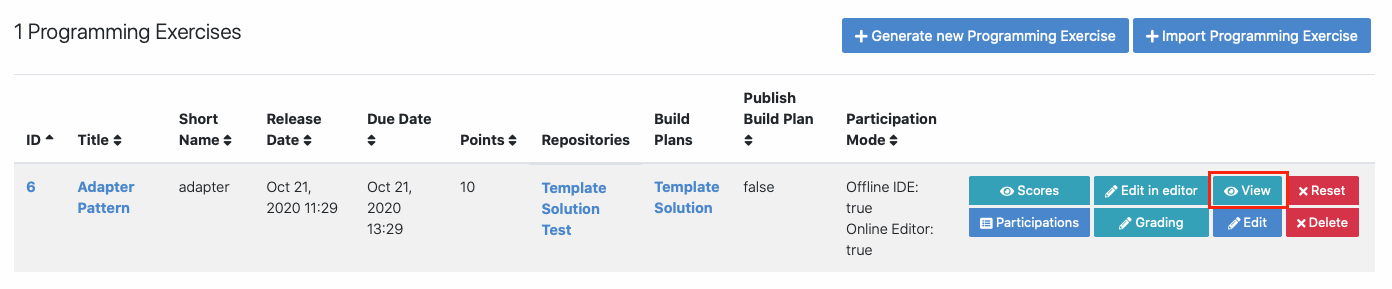

Open Course Management

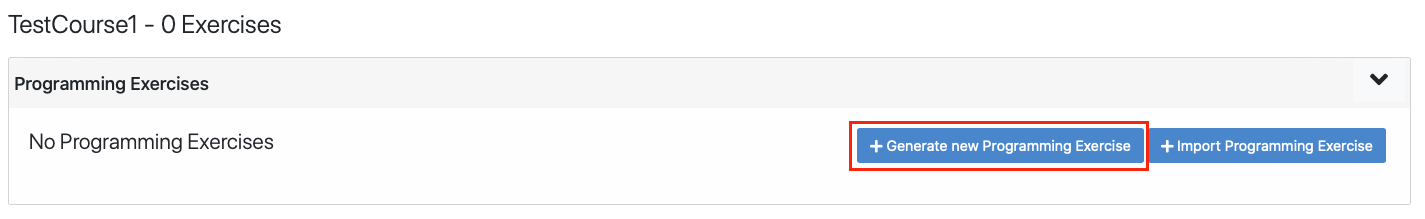

Navigate into Exercises of your preferred course

Generate programming exercise

Click on Generate new programming exercise

Artemis provides various options to customize programming exercises:

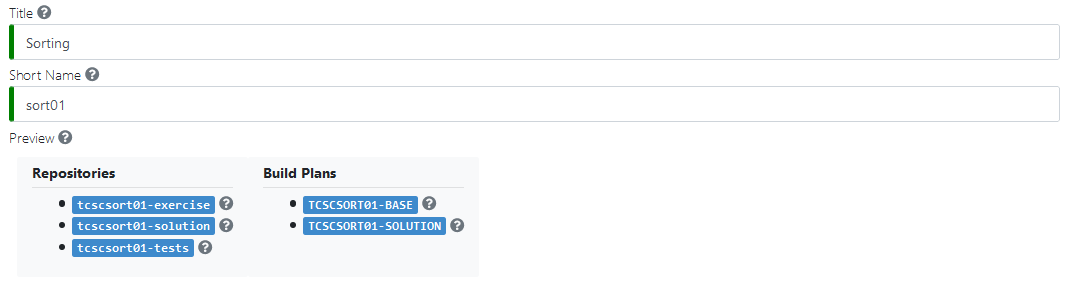

Title: The title of the exercise. It is used to create a project on the VCS server for the exercise. Instructors can change the title of the exercise after its creation.

Short Name: Together with the course short name, the exercise short name is used as a unique identifier for the exercise across Artemis (incl. repositories and build plans). The short name cannot be changed after the creation of the exercise.

Preview: Given the short name of the exercise and the short name of the course, Artemis displays a preview of the generated repositories and build plans.

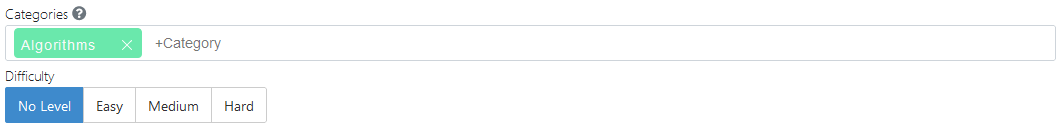

Categories: Instructors can freely define up to two categories per exercise. The categories are visible to students and should be used consistently to group similar kinds of exercises.

Difficulty: Instructors can give students information about the difficulty of the exercise.

Mode: The mode determines whether students work on the exercise alone or in teams. The mode cannot be changed after the exercise creation.

Team size: If Team mode is chosen, instructors can additionally give recommendations for the team size. Instructors/Tutors define the teams after the exercise creation.

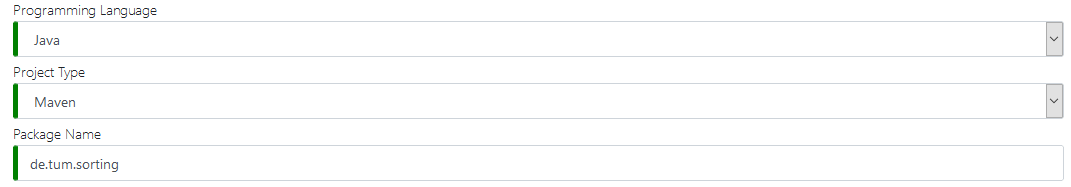

Programming Language: The programming language for the exercise. Artemis chooses the template accordingly. Refer to the programming exercise features for an overview of the supported features for each template.

Project Type: Determines the project structure of the template. Not available for all programming languages.

Package Name: The package name used for this exercise. Not available for all programming languages.

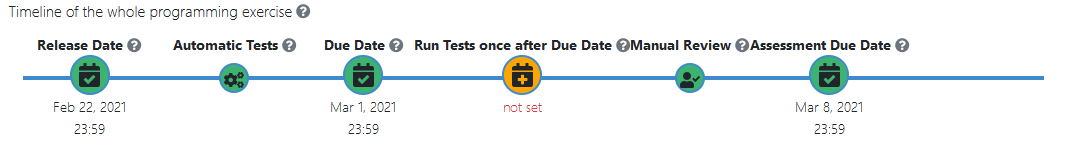

Release Date: Release date of the exercise. Students will only be able to participate in the exercise after this date.

Automatic Tests: Every commit of a participant triggers the execution of the tests in the Test repository. Tests that are specified to run after the due date are excluded. Excluding tests in this way is only possible if Run Tests once after Due Date has been activated. The tests that only run after the due date are chosen in the grading configuration.

Due Date: The deadline for the exercise. Commits made after this date are not graded.

Note

Students can still commit code and receive feedback after the exercise due date, if manual review is not activated. The results for these submissions will not be rated.

Run Tests once after Due Date: Activate this option to build and test the latest in-time submission of each student on this date. This date must be after the due date. The results created by this test run will be rated.

Manual Review: Instructors/Tutors have to manually review the latest student submissions after the automatic tests were executed.

Assessment Due Date: The deadline for the manual reviews. On this date, all manual assessments will be released to the students.
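The date rules described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Artemis code: the function names `timeline_errors` and `is_rated` are hypothetical, and treating a commit made exactly at the due date as in time is an assumption.

```python
from datetime import datetime
from typing import List, Optional


def timeline_errors(due_date: datetime,
                    run_tests_after_due_date: Optional[datetime] = None) -> List[str]:
    """Return a list of problems with the configured dates (empty if none)."""
    errors = []
    # The text states that the "Run Tests once after Due Date" date
    # must be after the due date.
    if run_tests_after_due_date is not None and run_tests_after_due_date <= due_date:
        errors.append("Run Tests once after Due Date must be after the due date")
    return errors


def is_rated(commit_time: datetime, due_date: datetime) -> bool:
    """Results for commits made after the due date are not rated."""
    # Assumption: a commit made exactly at the due date still counts as in time.
    return commit_time <= due_date


due = datetime(2021, 5, 1, 18, 0)
print(timeline_errors(due, datetime(2021, 5, 1, 17, 0)))  # one error message
print(is_rated(datetime(2021, 5, 1, 17, 59), due))  # True
print(is_rated(datetime(2021, 5, 2, 9, 0), due))  # False
```

Returning a list of messages instead of raising keeps the sketch usable for showing all problems of a form at once.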

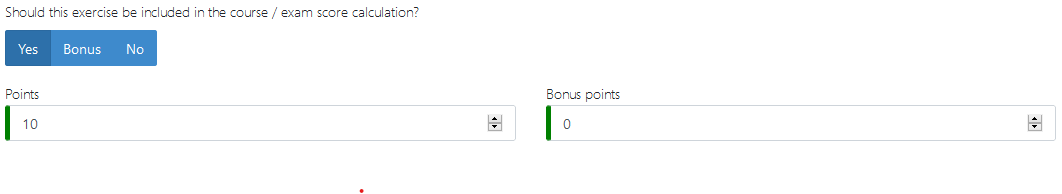

Should this exercise be included in the course / exam score calculation?

Yes: Instructors can define the maximum achievable Points and Bonus points for the exercise. The achieved total points will count towards the total course/exam score.

Bonus: The achieved Points will count towards the total course/exam score as a bonus.

No: The achieved Points will not count towards the total course/exam score.

Enable Static Code Analysis: Enable static code analysis for the exercise. The build plans will additionally execute static code analysis tools to find code quality issues in the submissions. This option cannot be changed after the exercise creation. Artemis provides a default configuration for the static code analysis tools but instructors are free to configure the static code analysis tools. Refer to the programming exercise features to see which programming languages support static code analysis.

Max Static Code Analysis Penalty: Available if static code analysis is active. Determines the maximum amount of points that can be deducted for code quality issues found in a submission as a percentage (between 0% and 100%) of Points. Defaults to 100% if left empty. Further options to configure the grading of code quality issues are available in the grading configuration.

Note

Given an exercise with 10 Points and a Max Static Code Analysis Penalty of 20%, at most 2 points will be deducted for code quality issues from the points achieved by passing test cases.
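The cap from the note can be written down as a small calculation. The following Python sketch is an illustration only; the function name `capped_penalty` and its parameters are hypothetical and not part of Artemis.

```python
from typing import Optional


def capped_penalty(raw_penalty: float, max_points: float,
                   max_penalty_percent: Optional[float] = None) -> float:
    """Limit the static code analysis deduction to a percentage of Points."""
    # The text states that an empty setting defaults to 100%.
    if max_penalty_percent is None:
        max_penalty_percent = 100.0
    if not 0.0 <= max_penalty_percent <= 100.0:
        raise ValueError("percentage must be between 0 and 100")
    cap = max_points * max_penalty_percent / 100.0
    return min(raw_penalty, cap)


# Exercise with 10 Points and a 20% cap: issues worth 3.5 points
# are reduced to a deduction of 2 points.
print(capped_penalty(3.5, 10, 20))  # 2.0
```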

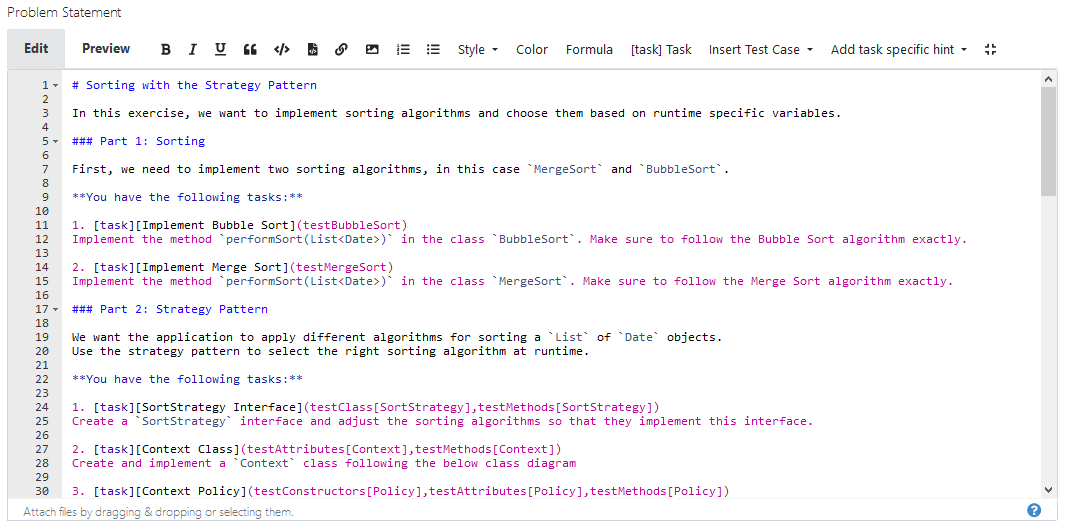

Problem Statement: The problem statement of the exercise. Refer to interactive problem statement for more information.

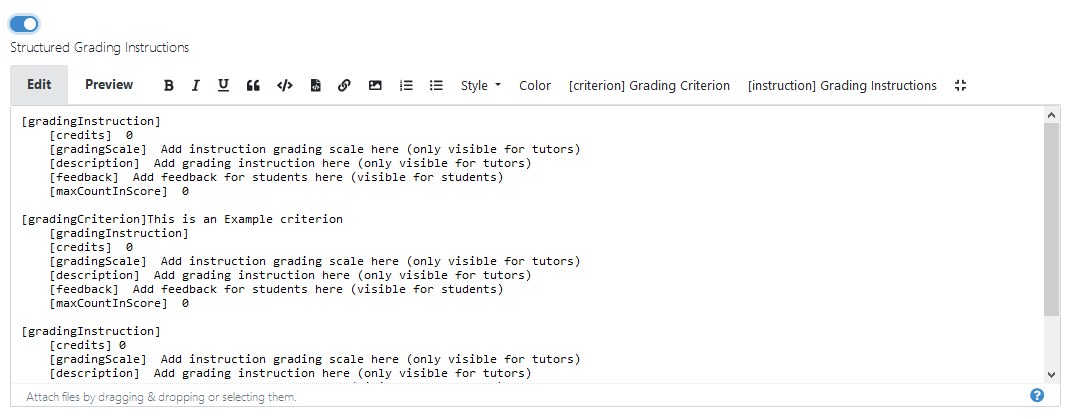

Grading Instructions: Available if Manual Review is active. Create instructions for the manual assessment of the exercise.

Sequential Test Runs: Activate this option to first run structural and then behavioral tests. This feature allows students to better concentrate on the immediate challenge at hand. Not supported together with static code analysis. Cannot be changed after the exercise creation.

Check out repository of sample solution: Activate this option to check out the solution into the 'solution' path. This option is useful to compare the student's submission with the sample solution. This option is not available for all programming languages.

Allow Offline IDE: Allow students to clone their personal repository and work on the exercise with their preferred IDE.

Allow Online Editor: Allow students to work on the exercise using the Artemis Online Code Editor.

Note

At least one of the options Allow Offline IDE and Allow Online Editor must be active.

Show Test Names to Students: Activate this option to show the names of the automated test cases to the students. If this option is disabled, students will not be able to visually differentiate between automatic and manual feedback.

Publish Build Plan: Allow students to access and edit their personal build plan. Useful for exercises where students should configure parts of the build plan themselves.
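Several of the options above come with constraints (at most two categories, at least one of offline IDE and online editor, no sequential test runs together with static code analysis). A minimal Python sketch of these checks could look as follows; the class `ExerciseOptions` and the function `option_errors` are hypothetical names for illustration and do not mirror the Artemis data model.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ExerciseOptions:
    """Hypothetical container for a few of the options described above."""
    categories: List[str] = field(default_factory=list)
    allow_offline_ide: bool = True
    allow_online_editor: bool = True
    static_code_analysis: bool = False
    sequential_test_runs: bool = False


def option_errors(options: ExerciseOptions) -> List[str]:
    """Collect violations of the constraints stated in the option descriptions."""
    errors = []
    if len(options.categories) > 2:
        errors.append("at most two categories per exercise")
    if not (options.allow_offline_ide or options.allow_online_editor):
        errors.append("Allow Offline IDE or Allow Online Editor must be active")
    if options.sequential_test_runs and options.static_code_analysis:
        errors.append("Sequential Test Runs is not supported with static code analysis")
    return errors


print(option_errors(ExerciseOptions(allow_offline_ide=False,
                                    allow_online_editor=False)))
```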

Click on

to create the exercise

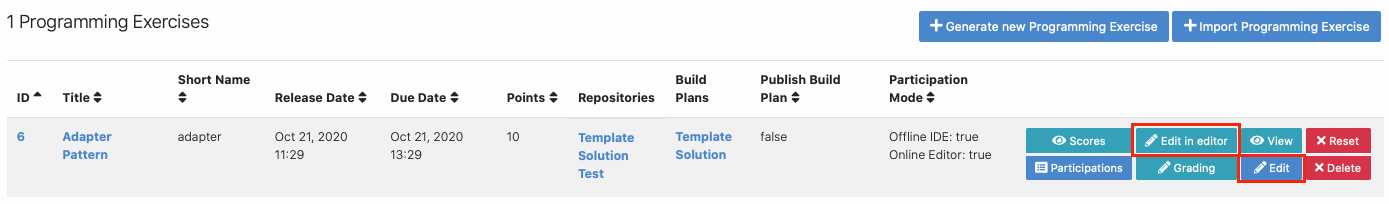

to create the exerciseResult: Programming Exercise

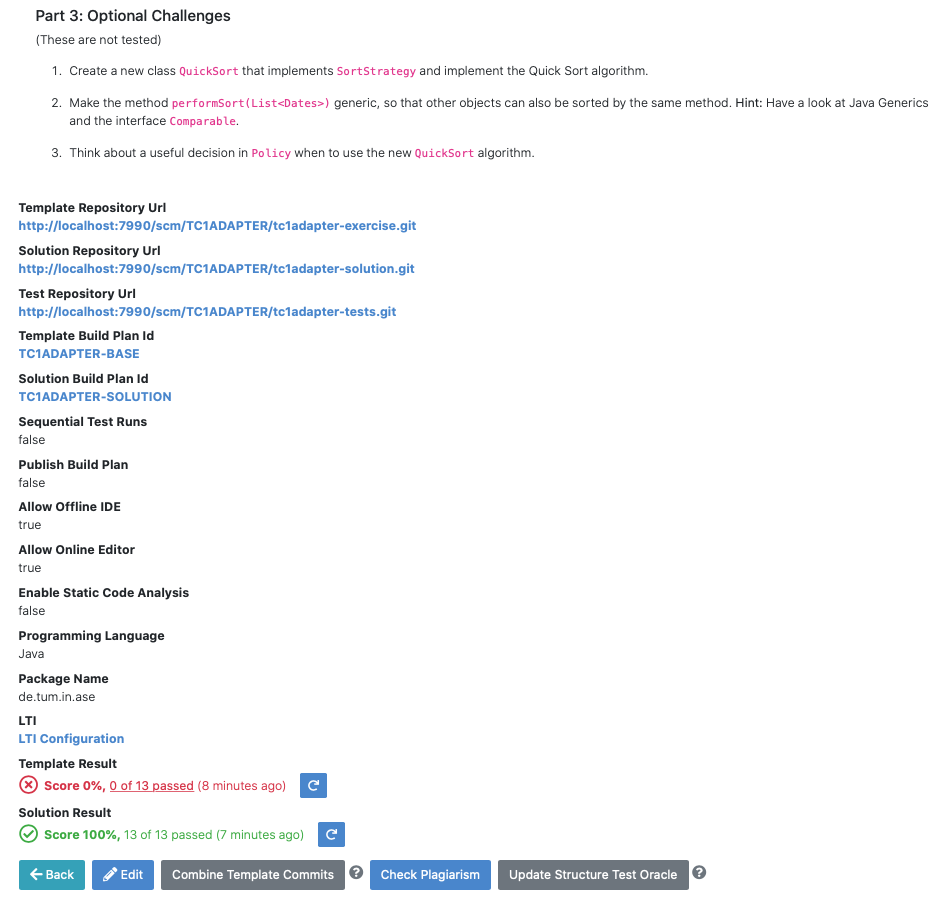

Artemis creates the repositories:

Template: template code, can be empty, all students receive this code at the beginning of the exercises

Test: contains all test cases, e.g. based on JUnit, and optionally static code analysis configuration files. The repository is hidden from students

Solution: solution code, typically hidden from students, can be made available after the exercise

Artemis creates two build plans:

Template: also called BASE, basic configuration for the test + template repository, used to create student build plans

Solution: also called SOLUTION, configuration for the test + solution repository, used to manage test cases and to verify the exercise configuration

Update exercise code in repositories

Alternative 1: Clone the 3 repositories and adapt the code on your local computer in your preferred development environment (e.g. Eclipse).

To execute tests, copy the template (or solution) code into a folder assignment in the test repository and execute the tests (e.g. using mvn clean test)

Commit and push your changes

Notes for Haskell: In addition to the assignment folder, the executables of the build file expect the solution repository checked out in the solution subdirectory of the test folder and also allow for a template subdirectory to easily test the template on your local machine. You can use the following script to conveniently check out an exercise and create the right folder structure:

    #!/bin/sh
    # Arguments:
    # $1: exercise short name as specified on Artemis
    # $2: (optional) output folder name
    #
    # Note: you might want to adapt the `BASE` variable below according to your needs

    if [ -z "$1" ]; then
      echo "No exercise short name supplied."
      exit 1
    fi

    EXERCISE="$1"

    if [ -z "$2" ]; then
      # use the exercise name if no output folder name is specified
      NAME="$1"
    else
      NAME="$2"
    fi

    # default base URL to repositories; change this according to your needs
    BASE="ssh://git@bitbucket.ase.in.tum.de:7999/$EXERCISE/$EXERCISE"

    # clone the test repository
    git clone "$BASE-tests.git" "$NAME" && \
      # clone the template repository
      git clone "$BASE-exercise.git" "$NAME/template" && \
      # clone the solution repository
      git clone "$BASE-solution.git" "$NAME/solution" && \
      # create an assignment folder from the template repository
      cp -R "$NAME/template" "$NAME/assignment" && \
      # remove the .git folder from the assignment folder
      rm -r "$NAME/assignment/.git/"

Alternative 2: Open Edit in Editor in Artemis (in the browser) and adapt the code in the online code editor

You can change between the different repos and submit the code when needed

Alternative 3: Use IntelliJ with the Orion plugin and change the code directly in IntelliJ

Check the results of the template and the solution build plan

They should not report a failed build

If a build fails, some configuration is wrong; please check the build errors on the corresponding build plan.

Hints: Test cases should only reference code that is available in the template repository. If this is not possible, please try out the option Sequential Test Runs

Optional: Adapt the build plans

The build plans are preconfigured and typically do not need to be adapted

However, if you have additional build steps or different configurations, you can adapt the BASE and SOLUTION build plan as needed

When students start the programming exercise, the current version of the BASE build plan will be copied. All changes in the configuration will be considered

Optional: Configure static code analysis tools

The Test repository contains files for the configuration of static code analysis tools, if static code analysis was activated during the creation/import of the exercise

The folder staticCodeAnalysisConfig contains configuration files for each used static code analysis tool

On exercise creation, Artemis generates a default configuration for each tool, which contains a predefined set of parameterized activated/excluded rules. The configuration files serve as a documented template that instructors can freely tailor to their needs.

On exercise import, Artemis copies the configuration files from the imported exercise

The following table depicts the supported static code analysis tools for each programming language, the dependency mechanism used to execute the tools and the name of their respective configuration files

| Programming Language | Execution Mechanism | Supported Tools | Configuration File |
|---|---|---|---|
| Java | Maven plugins (pom.xml) | Spotbugs | spotbugs-exclusions.xml |
| | | Checkstyle | checkstyle-configuration.xml |
| | | PMD | pmd-configuration.xml |
| | | PMD Copy/Paste Detector (CPD) | |
| Swift | Script | SwiftLint | .swiftlint.yml |

Note

The Maven plugins for the Java static code analysis tools provide additional configuration options.

The build plans use a special task/script for the execution of the tools

Note

Instructors are able to completely disable the usage of a specific static code analysis tool by removing the plugin/dependency from the execution mechanism. In case of Maven plugins, instructors can remove the unwanted tools from the pom.xml. Alternatively, instructors can alter the task/script that executes the tools in the build plan. PMD and PMD CPD are a special case as both tools share a common plugin. To disable one or the other, instructors must delete the execution of a tool from the build plan.

Adapt the interactive problem statement

Click the edit button of the programming exercise or navigate into the online editor and adapt the interactive problem statement.

The initial example shows how to integrate tasks, link tests and integrate interactive UML diagrams

Configure Grading

General Actions

Save the current grading configuration of the open tab

Reset the current grading configuration of the open tab to the default values. For the Test Case Tab, all test cases are set to weight 1, bonus multiplier 1 and bonus points 0. For the Code Analysis Tab, the default configuration depends on the selected programming language.

Re-evaluate all scores according to the currently saved settings using the individual feedback stored in the database

Trigger all build plans. This leads to the creation of new results using the updated grading configuration

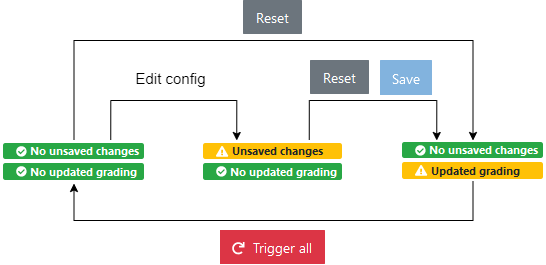

Two badges display whether the current configuration has been saved and whether the grading was changed. The following graphic visualizes how each action affects the grading page state:

Warning

Artemis always grades new submissions with the latest configuration but existing submissions might have been graded with an outdated configuration. Artemis warns instructors about grading inconsistencies with the Updated grading badge.

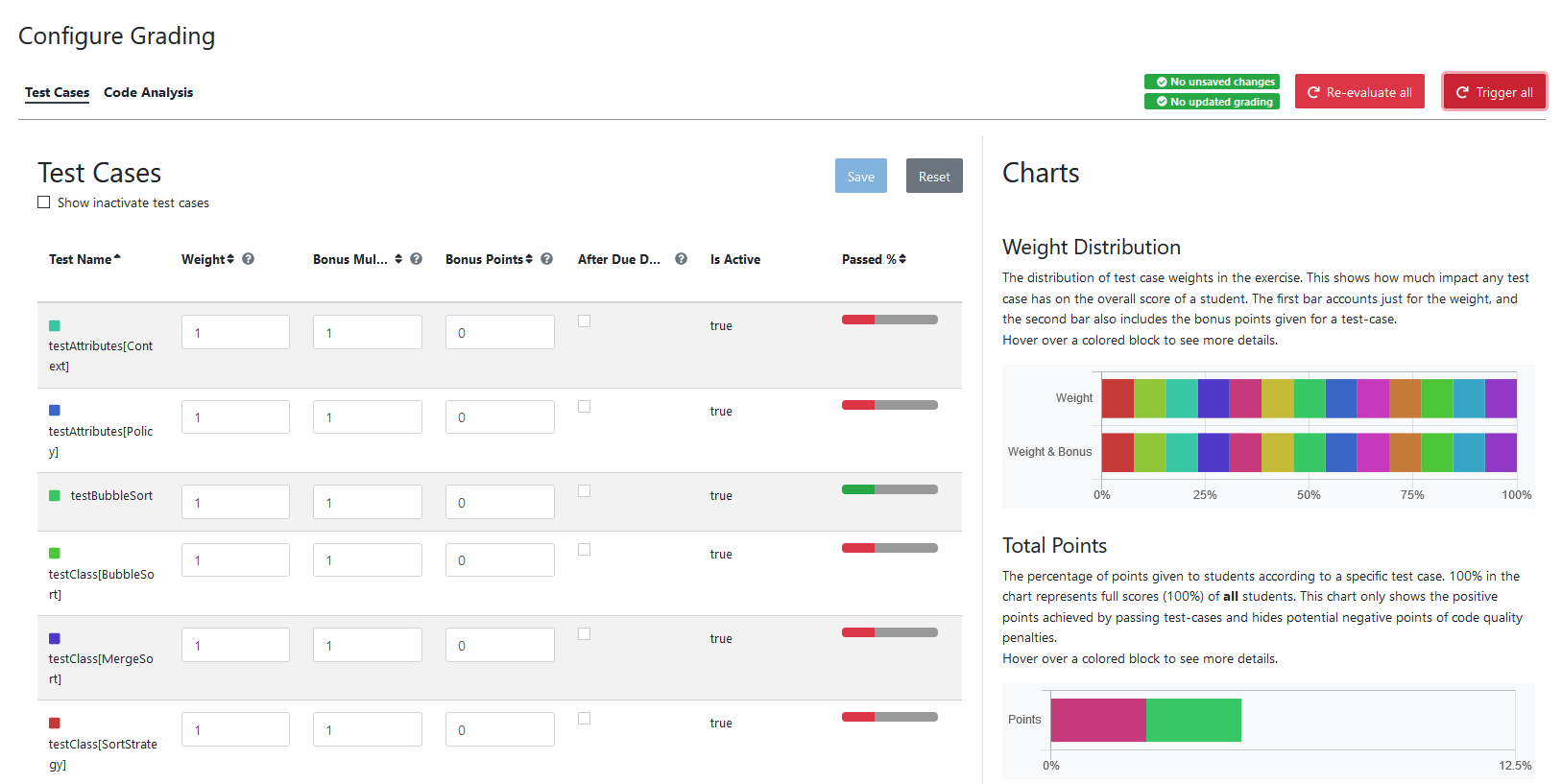

Test Case Tab: Adapt the contribution of each test case to the overall score

Note

Artemis registers the test cases defined in the Test repository using the results generated by the Solution build plan. The test cases are only shown after the first execution of the Solution build plan.

On the left side of the page, instructors can configure the test case settings:

Test Name: Name of the test case as defined in Test repository

Weight: The points for a test case are proportional to the weight (sum of all weights as the denominator) and are calculated as a fraction of the maximum points

Note

Bonus points for an exercise (implied by a score higher than 100%) are only achievable if at least one bonus multiplier is greater than 1 or bonus points are given for a test case

Bonus multiplier: Allows instructors to multiply the points for passing a test case without affecting the points rewarded for passing other test cases

Bonus points: Adds a flat point bonus for passing a test case

After Due Date: Select test cases that should only be executed after the due date has passed. This option is only available if the Timeline of the whole programming exercise (available during exercise creation, edit, import option) includes Run Tests once after Due Date

Is Active: Displays whether the test case is currently part of the grading configuration. The Show inactive test cases option controls whether inactive test cases are displayed

Passed %: Displays statistics about the percentage of participating students that passed or failed the test case

Note

Example 1: Given an exercise with 3 test cases, maximum points of 10 and 10 achievable bonus points. The highest achievable score is \(\frac{10+10}{10}*100=200\%\). Test Case (TC) A has weight 2, TC B and TC C have weight 1 (bonus multipliers 1 and bonus points 0 for all test cases). A student that only passes TC A will receive 50% of the maximum points (5 points).

Note

Example 2: Given the configuration of Example 1 with an additional bonus multiplier of 2 for TC A. Passing TC A accounts for \(\frac{2*2}{2+1+1}*100=100\%\) of the maximum points (10). Passing TC B or TC C accounts for \(\frac{1}{4}*100=25\%\) of the maximum points (2.5). A student who passes all test cases will receive a score of 150%, which amounts to 10 points and 5 bonus points.

Note

Example 3: Given the configuration of Example 2 with additional bonus points of 5 for TC B. The points achieved for passing TC A and TC C do not change. Passing TC B now accounts for 2.5 points plus 5 bonus points (7.5). A student who passes all test cases will receive 10 (TC A) + 7.5 (TC B) + 2.5 (TC C) points, which amounts to 10 points and 10 bonus points and a score of 200%.
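The three examples can be reproduced with a short Python sketch. It is an illustration derived from the examples and not the Artemis implementation: the names `GradedCase`, `case_points` and `score_percent` are hypothetical, and clamping the total at maximum points plus achievable bonus points is an assumption based on the highest achievable score stated in Example 1.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass
class GradedCase:
    name: str
    weight: float
    bonus_multiplier: float = 1.0
    bonus_points: float = 0.0


def case_points(case: GradedCase, all_cases: List[GradedCase],
                max_points: float) -> float:
    """Points for passing one test case: weighted share plus flat bonus."""
    total_weight = sum(c.weight for c in all_cases)
    weighted = 0.0
    if total_weight > 0:  # guard against a configuration with only zero weights
        weighted = case.weight * case.bonus_multiplier / total_weight * max_points
    return weighted + case.bonus_points


def score_percent(passed: Set[str], all_cases: List[GradedCase],
                  max_points: float, max_bonus_points: float) -> float:
    """Score in percent of the maximum points for the passed test cases."""
    points = sum(case_points(c, all_cases, max_points)
                 for c in all_cases if c.name in passed)
    # Assumption: the total is capped at maximum points plus bonus points.
    points = min(points, max_points + max_bonus_points)
    return points / max_points * 100.0


# Configuration of Example 3: TC A has weight 2 and bonus multiplier 2,
# TC B has weight 1 and 5 bonus points, TC C has weight 1.
cases = [GradedCase("A", 2, bonus_multiplier=2),
         GradedCase("B", 1, bonus_points=5),
         GradedCase("C", 1)]
print(score_percent({"A", "B", "C"}, cases, 10, 10))  # 200.0
print(score_percent({"A"}, cases, 10, 10))  # 100.0
```

Note that the bonus multiplier only scales the numerator, so the share of the other test cases stays unchanged, which matches Example 2.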

On the right side of the page, charts display statistics about the current test case configuration. If changes are made to the configuration, a preview of the statistics is shown.

Weight Distribution: The distribution of test case weights. Visualizes the impact of each test case for the score calculation

Total Points: The percentage of points given to students according to a specific test case. 100% in the chart represents full scores (100%) of all students

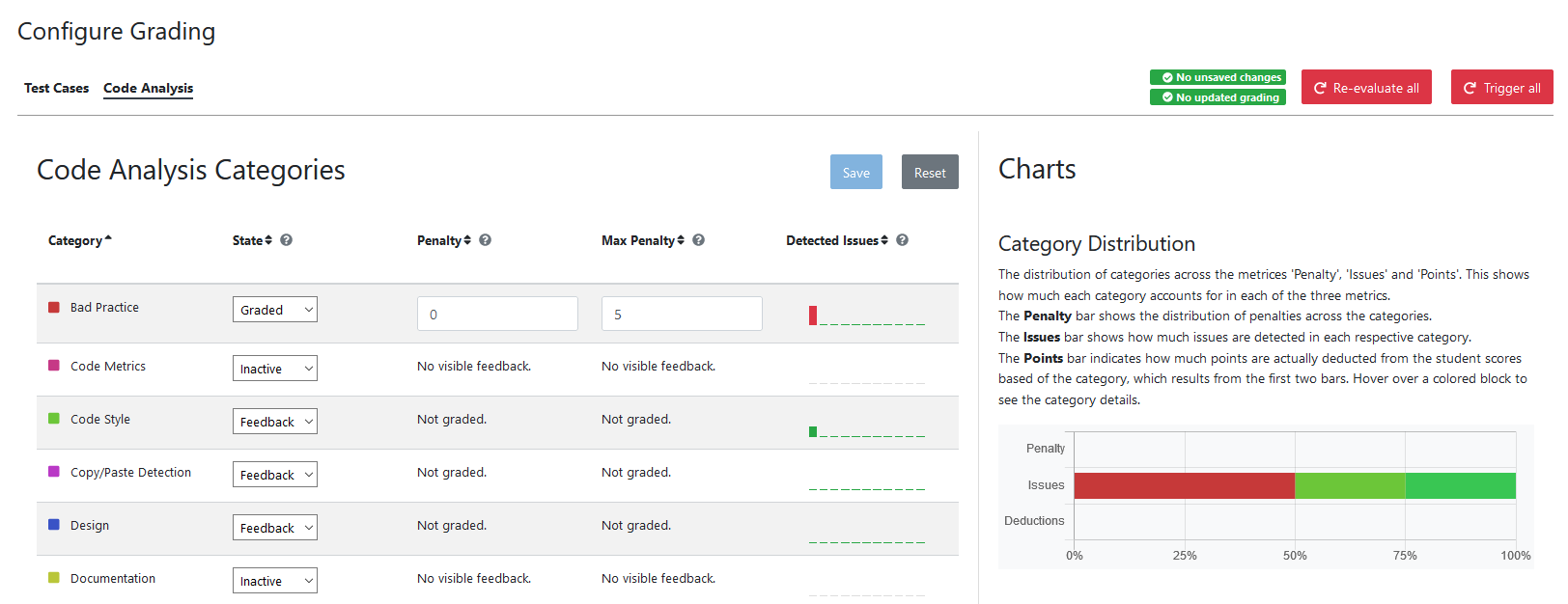

Code Analysis Tab: Configure the visibility and grading of code quality issues on a category-level

Note

The Code Analysis Tab is only available if static code analysis was activated for the exercise.

Code quality issues found during the automatic assessment of a submission are grouped into categories. Artemis maps categories defined by the static code analysis tools to Artemis categories according to the following table:

| Category | Description | Java | Swift |
|---|---|---|---|
| Bad Practice | Code that violates recommended and essential coding practices | Spotbugs BAD_PRACTICE, Spotbugs I18N, PMD Best Practices | |
| Code Style | Code that is confusing and hard to maintain | Spotbugs STYLE, Checkstyle blocks, Checkstyle coding, Checkstyle modifier, PMD Code Style | Swiftlint (all rules) |
| Potential Bugs | Coding mistakes, error-prone code or threading errors | Spotbugs CORRECTNESS, Spotbugs MT_CORRECTNESS, PMD Error Prone, PMD Multithreading | |
| Duplicated Code | Code clones | PMD CPD | |
| Security | Vulnerable code, unchecked inputs and security flaws | Spotbugs MALICIOUS_CODE, Spotbugs SECURITY, PMD Security | |
| Performance | Inefficient code | Spotbugs PERFORMANCE, PMD Performance | |
| Design | Program structure/architecture and object design | Checkstyle design, PMD Design | |
| Code Metrics | Violations of code complexity metrics or size limitations | Checkstyle metrics, Checkstyle sizes | |
| Documentation | Code with missing or flawed documentation | Checkstyle javadoc, Checkstyle annotation, PMD Documentation | |
| Naming & Format | Rules that ensure the readability of the source code (name conventions, imports, indentation, annotations, white spaces) | Checkstyle imports, Checkstyle indentation, Checkstyle naming, Checkstyle whitespace | |
| Miscellaneous | Uncategorized rules | Checkstyle miscellaneous | |

Note

For Swift, only the category Code Style can contain code quality issues currently. All other categories displayed on the grading page are dummies.

On the left side of the page, instructors can configure the static code analysis categories.

Category: The name of the category defined by Artemis

State:

INACTIVE: Code quality issues of an inactive category are not shown to students and do not influence the score calculation

FEEDBACK: Code quality issues of a feedback category are shown to students but do not influence the score calculation

GRADED: Code quality issues of a graded category are shown to students and deduct points according to the Penalty and Max Penalty configuration

Penalty: Artemis deducts the selected amount of points for each code quality issue from points achieved by passing test cases

Max Penalty: Limits the amount of points deducted for code quality issues belonging to this category

Detected Issues: Visualizes how many students encountered a specific number of issues in this category
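How State, Penalty and Max Penalty interact can be sketched in Python as follows. This is an illustration under assumptions, not Artemis code: the names `CategoryState`, `IssueCategory`, `shown_to_students` and `category_deduction` are hypothetical, and treating a missing Max Penalty as "no cap for this category" is an assumption.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class CategoryState(Enum):
    INACTIVE = "INACTIVE"
    FEEDBACK = "FEEDBACK"
    GRADED = "GRADED"


@dataclass
class IssueCategory:
    name: str
    state: CategoryState
    penalty: float = 0.0                 # points deducted per issue
    max_penalty: Optional[float] = None  # assumption: None means no category cap


def shown_to_students(category: IssueCategory) -> bool:
    """Issues of FEEDBACK and GRADED categories are visible to students."""
    return category.state is not CategoryState.INACTIVE


def category_deduction(category: IssueCategory, issue_count: int) -> float:
    """Points deducted for the issues found in one category."""
    if category.state is not CategoryState.GRADED:
        return 0.0
    deduction = category.penalty * issue_count
    if category.max_penalty is not None:
        deduction = min(deduction, category.max_penalty)
    return deduction


style = IssueCategory("Code Style", CategoryState.GRADED, penalty=0.5, max_penalty=2.0)
print(category_deduction(style, 3))   # 1.5
print(category_deduction(style, 10))  # 2.0
```

The sum of all category deductions would additionally be limited by the exercise-wide Max Static Code Analysis Penalty described during exercise creation.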

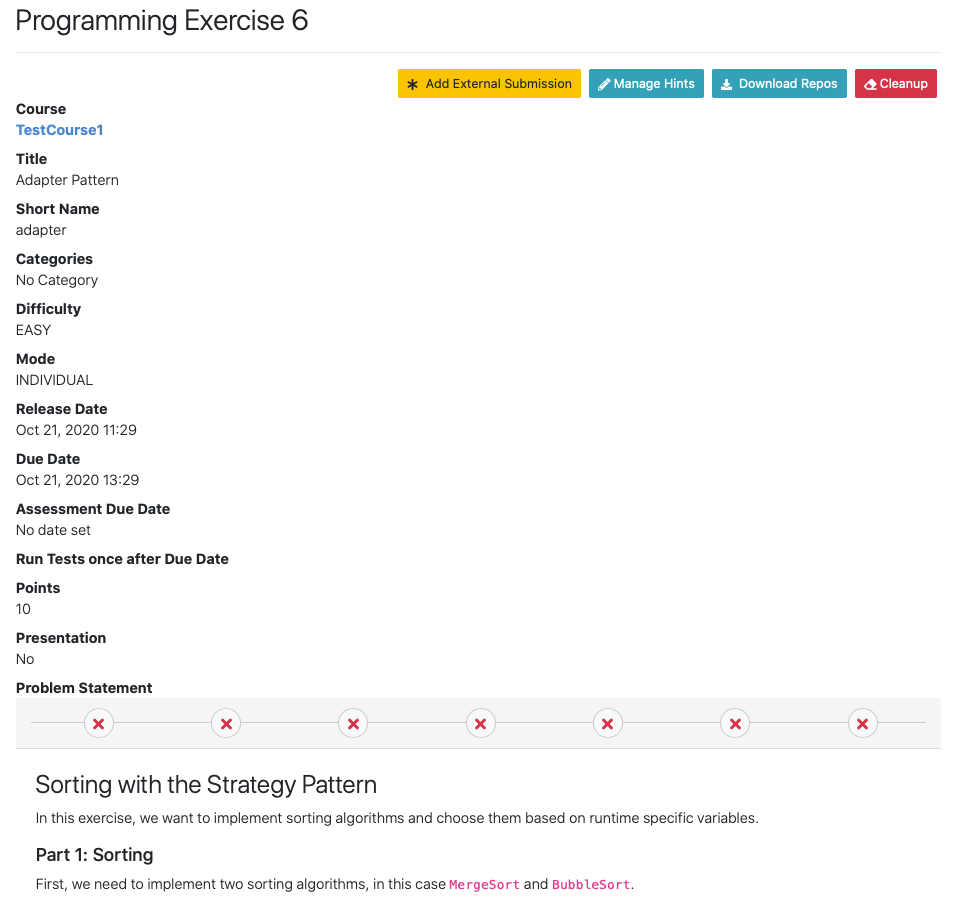

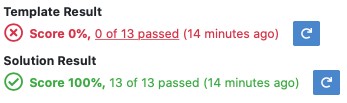

Verify the exercise configuration

Open the page of the programming exercise

The template result should have a score of 0% with 0 of X passed or 0 of X passed, 0 issues (if static code analysis is enabled)

The solution result should have a score of 100% with X of X passed or X of X passed, 0 issues (if static code analysis is enabled)

Note

If static code analysis is enabled and issues are found in the template/solution result, instructors should improve the template/solution or disable the rule that produced the unwanted/unimportant issue.

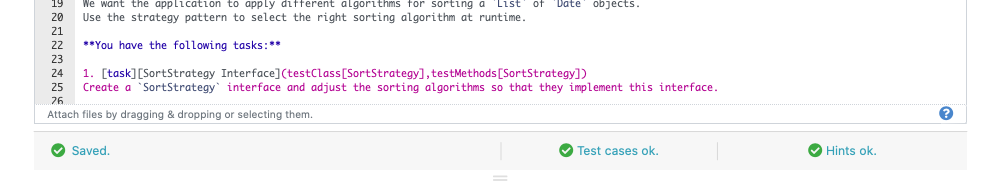

In the editing view, you should see Test cases ok and Hints ok below the problem statement

Exercise Import

On exercise import, Artemis copies the repositories, build plans, interactive problem statement and grading configuration from the imported exercise.

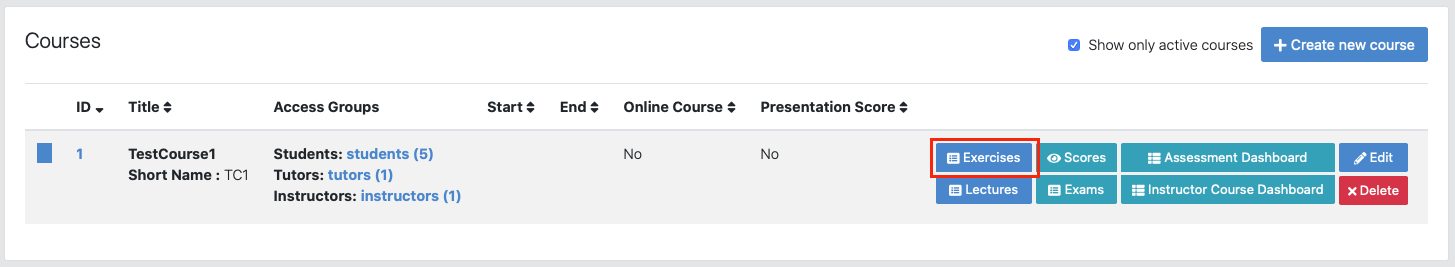

Open Course Management

Navigate into Exercises of your preferred course

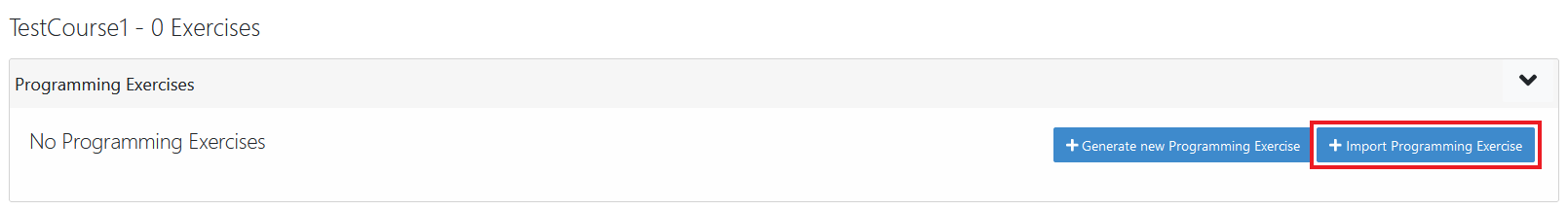

Import programming exercise

Click on Import Programming Exercise

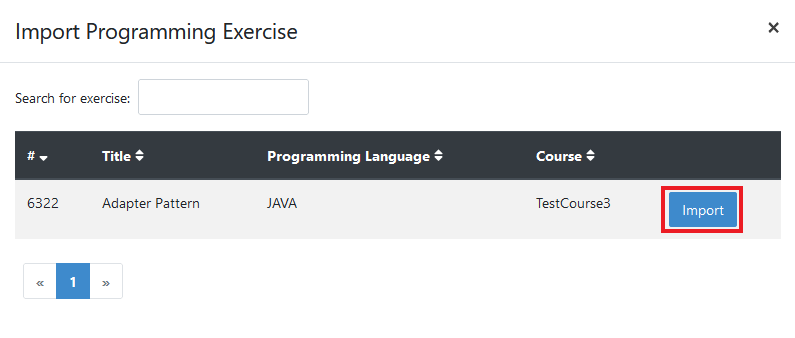

Select an exercise to import

Note

Instructors can import exercises from courses in which they are registered as instructors

Artemis provides special options to update the assessment process

Recreate Build Plans: Create new build plans instead of copying them from the imported exercise

Update Template: Update the template files in the repositories. This can be useful if the imported exercise is old and contains outdated dependencies. For Java, Artemis replaces JUnit4 by Ares (which includes JUnit5) and updates the dependencies and plugins with the versions found in the latest template. Afterwards you might need to adapt the test cases.

Instructors are able to activate/deactivate static code analysis. Changing this option from the original value requires the activation of Recreate Build Plans and Update Template.

Note

Recreate Build Plans and Update Template are automatically set if the static code analysis option changes compared to the imported exercise. The plugins, dependencies and static code analysis tool configurations are added/deleted/copied depending on the new and the original state of this option.

Fill out all mandatory values and confirm the import

Note

The interactive problem statement can be edited after finishing the import. Some options such as Sequential Test Runs cannot be changed on exercise import.

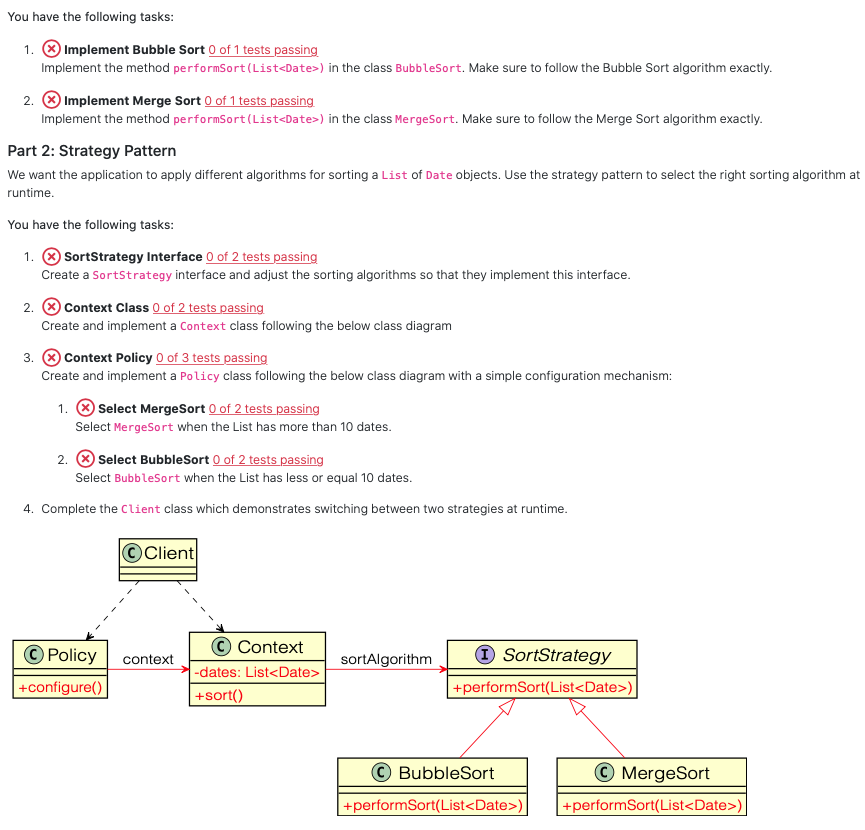

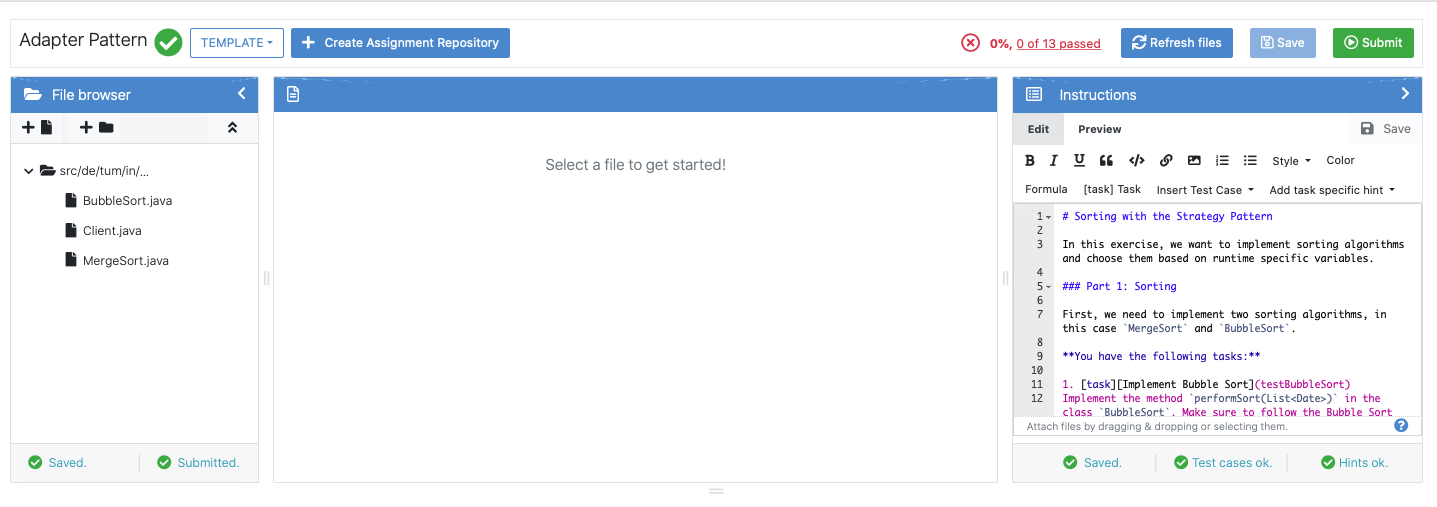

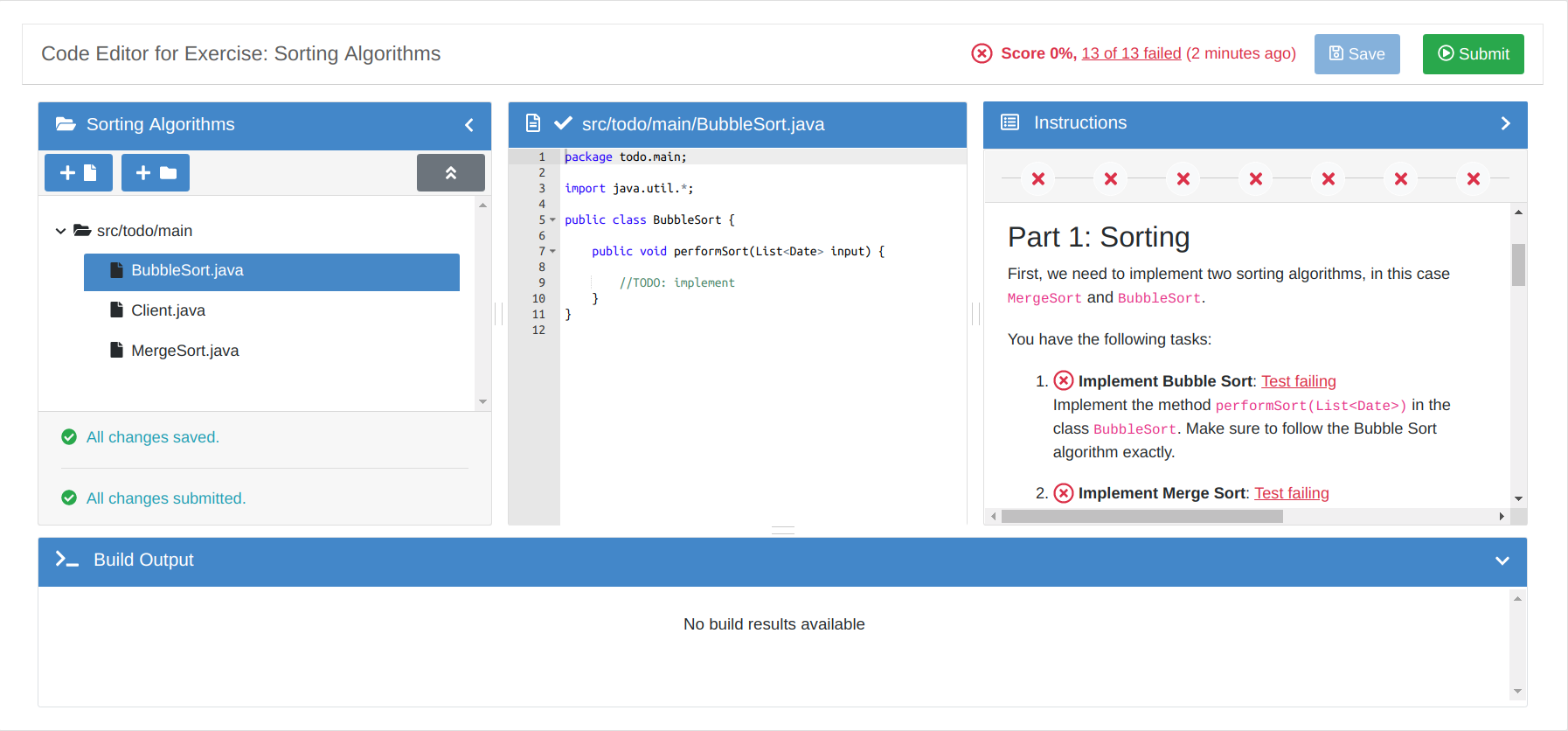

Online Editor

The following screenshot shows the online code editor with interactive and dynamic exercise instructions on the right side. Tasks and UML diagram elements are referenced by test cases and update their color from red to green after students submit a new version and all test cases associated with a task or diagram element pass. This allows the students to immediately recognize which tasks are already fulfilled and is particularly helpful for programming beginners.

Online Editor

Testing with Artemis Java Test Sandbox

Artemis Java Test Sandbox (abbr. AJTS) is a JUnit 5 extension for easy and secure Java testing on Artemis.

Its main features are

a security manager to prevent students crashing the tests or cheating

more robust tests and builds due to limits on time, threads and I/O

support for public and hidden Artemis tests, where hidden ones obey a custom deadline

utilities for improved feedback in Artemis like processing multiline error messages or pointing to a possible location that caused an Exception

utilities to test exercises using System.out and System.in comfortably

For more information see AJTS GitHub